home |

stacks treatise index |

1. Intro: stack basics |

2. subroutine return addresses & nesting |

3. interrupts |

4. virtual stacks |

5. stack addressing |

6. passing parameters |

7. inlined data |

8. RPN operations |

9. RPN efficiency |

10. 65c02 added instructions |

11. synth instructions w/ RTS/RTI/JSR |

12. where-am-I routines |

13. synthesizing 65816 stack instructions |

14. local variables, environments |

15. recursion |

16. enough stack space? |

17. forming program structures |

18. stack potpourri |

19. further reading |

A: StackOps.ASM |

B: 816StackOps.ASM |

Appendix C

6502 STACKS TREATISE

RPN efficiency

Decades ago, there were calculator wars between the AOS crowd and the RPN crowd, especially TI versus HP. The TI fans could really

only argue that AOS was supposedly more intuitive (as mentioned near the beginning of the last section), while the HP fans could tout RPN's

reduced number of keystrokes, program steps, and variables needed for any given job. In contests, the RPN boys routinely got the job

done faster. TI supplied more memory for the money (which is why I started with a TI-58c), but HP gave more quality and every other

desirable trait, including, in the HP-41, potentially a lot more total memory than the TI-59 offered—just at a higher price. When

I went from the TI-59 to the HP-41cx (both shown at right), I definitely felt all of these effects. I never read what took place in

board meetings after that, but TI must have seen that there was no hope of winning the favor of the professionals, and the result was that

TI went after the school market while HP kept the professional market.

Decades ago, there were calculator wars between the AOS crowd and the RPN crowd, especially TI versus HP. The TI fans could really

only argue that AOS was supposedly more intuitive (as mentioned near the beginning of the last section), while the HP fans could tout RPN's

reduced number of keystrokes, program steps, and variables needed for any given job. In contests, the RPN boys routinely got the job

done faster. TI supplied more memory for the money (which is why I started with a TI-58c), but HP gave more quality and every other

desirable trait, including, in the HP-41, potentially a lot more total memory than the TI-59 offered—just at a higher price. When

I went from the TI-59 to the HP-41cx (both shown at right), I definitely felt all of these effects. I never read what took place in

board meetings after that, but TI must have seen that there was no hope of winning the favor of the professionals, and the result was that

TI went after the school market while HP kept the professional market.

An advantage to RPN is that there are no parentheses, and almost no syntax requirements. There

are no precedence rules either, as intermediate values that are waiting their turn to get involved in the calculation again

are held on the stack. Higher-level programming languages frequently (usually?) use RPN internally; but giving only an algebraic user

interface increases the overhead of parsing equations, which is apparently partly why Hewlett-Packard and other calculator companies started

using RPN early on, to achieve greater efficiency with the hardware limitations of the day. It required a little new learning on the

part of the user, but once he started thinking in RPN, he usually comes to prefer it.

RPN (first discussed here in Section 4) also has the already mentioned implicit parameter-passing which takes no

additional computing effort, and the fact that there's no need to ever re-mirror any parameters when passing them on the data stack from one

subroutine to another nested one. (Side note: There's an RPN primer

here that's oriented to RPN calculators with a 4-level stack.)

RPN's code-density advantage may not seem that important today with cheap memory; but the 6502 is

still limited to a 64K address map. With extra hardware for banking, you can have a window into a much larger memory space, but it is

not nearly as graceful as what the 65816 does, which in turn is not as graceful as how some other processors handle a larger memory

space. I tend to mention the Forth programming language a lot here since it is heavily stack-oriented and I have used it since 1990

on the workbench for very quick software development. It gives excellent code density, and companies have often found

that it allows them to use cheaper hardware and still get better performance as well as much

faster software development than, say, C. When I want maximum performance or speed, it is very easy to go into assembly for

a piece of code, then come back to high-level Forth. I can take advantage of Forth's interactiveness and higher

language level to develop a proof-of-concept word (subroutine) first in Forth, then re-write it in assembly for maximum performance without

having to change any of the code that calls it.

It is not my goal here to get you into Forth per se; but if you really take advantage of what can be done with a data stack on a

6502, you'll be half way there anyway, especially since I've retained the names of the routines as much as possible. There are

several ways to implement Forth threading. When we run

all these subroutines for handling 16-bit data on the data stack, we are getting close to subroutine-threaded code (STC) Forth. Since

it takes three bytes to call a subroutine, it does not seem as efficient as other methods which mostly take only two (being mostly a list of

addresses); but Bruce Clark explains how the faster-running STC

Forth avoids the expected memory penalties. He gives 9 reasons, starting in the middle of his long post in the middle of the linked

page. STC of course eliminates the need for NEXT, nest, and unnest, thus

improving speed compared to other methods, too.

Stack computers (see here and

here) have taken advantage of the stated efficiency

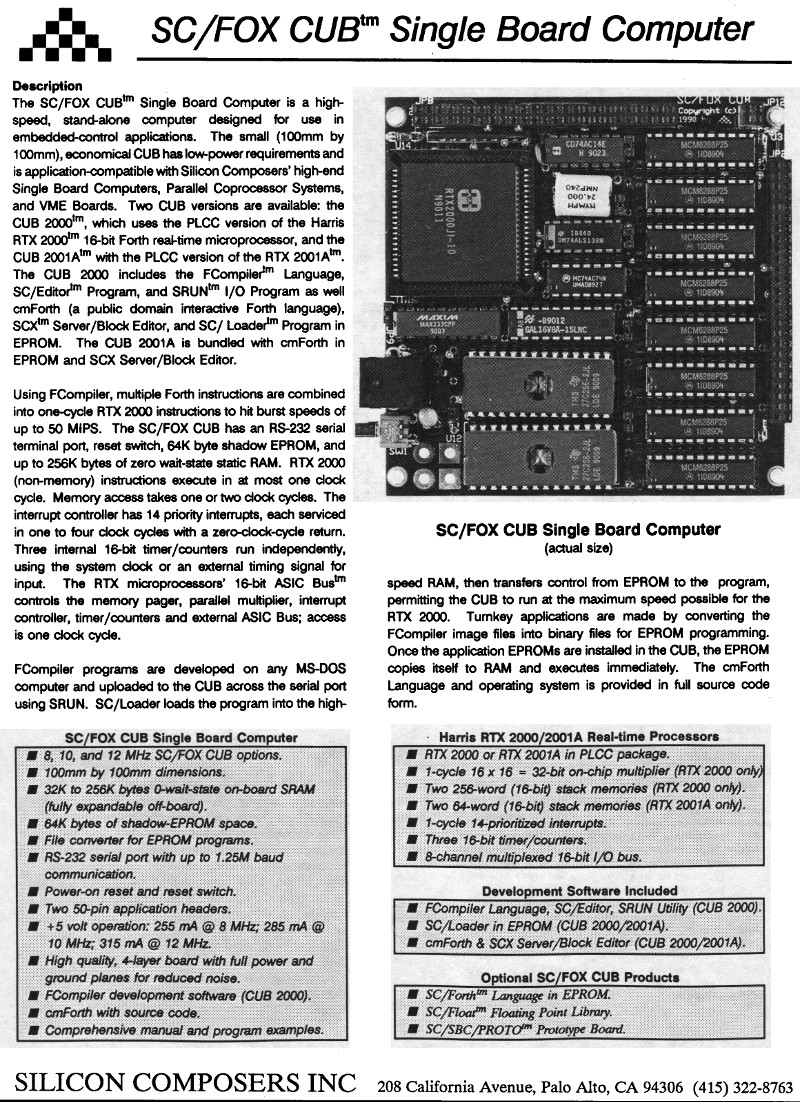

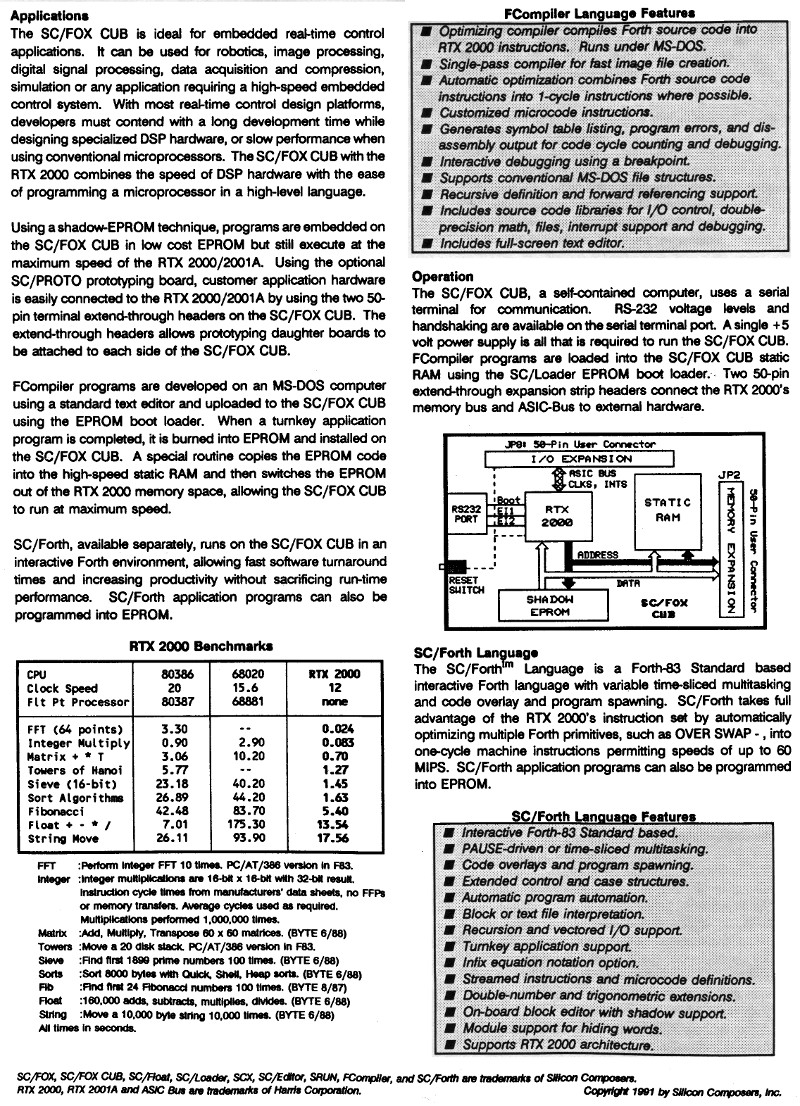

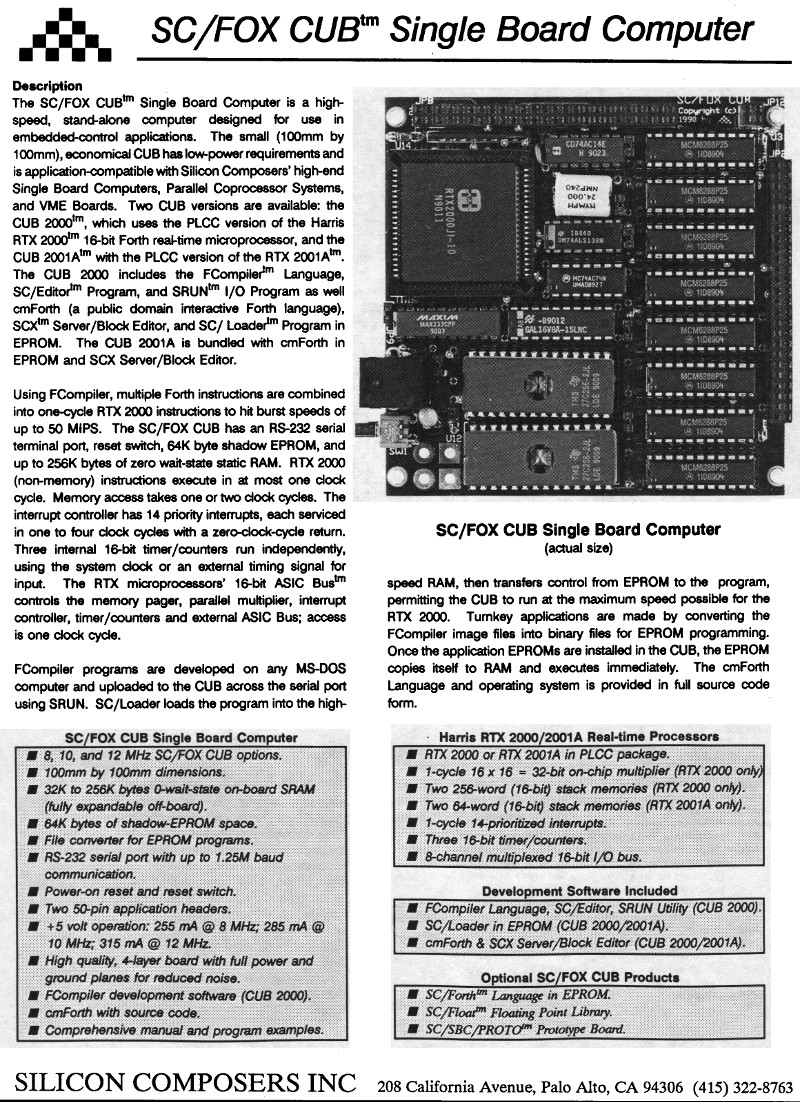

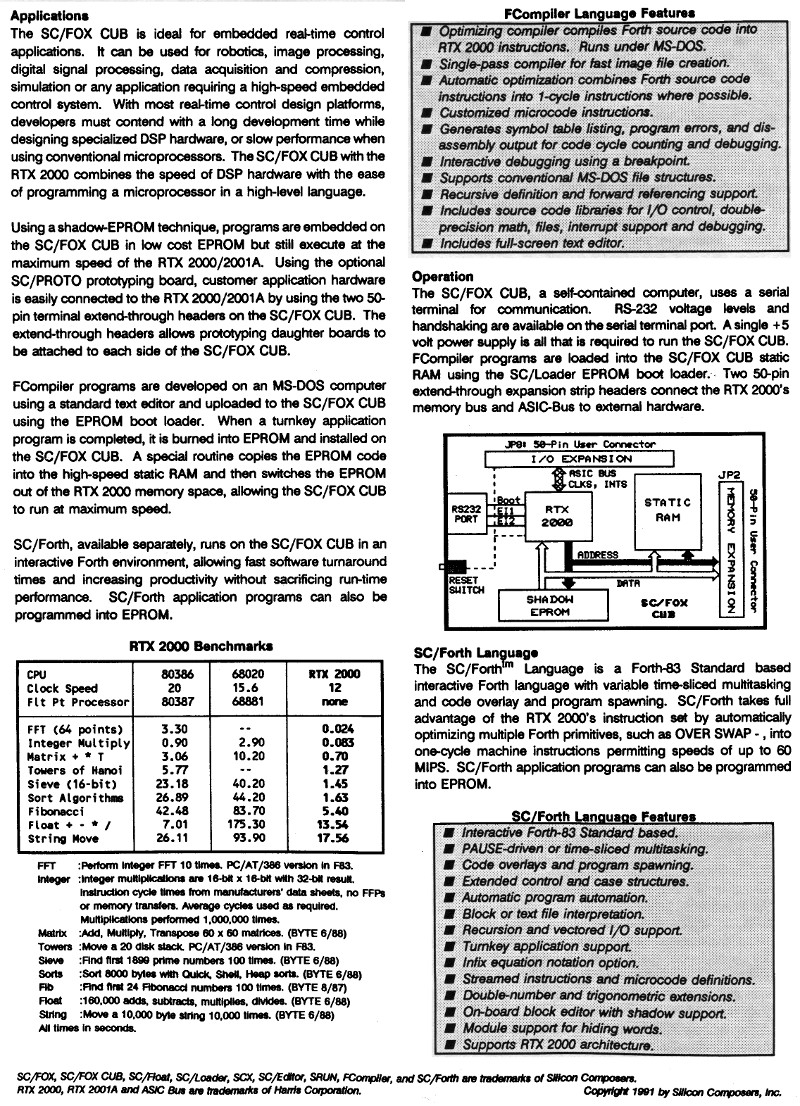

to get lots of computing power in a relatively simple (ie, low gate count) processor. I kept an advertisement from 1991 for the Silicon

Composers SC/Fox Cub SBC that used the Harris RTX2000 stack processor. Scans of the two pages are below. Briefly, it routinely does

16MIPS (16 Million Instructions Per Second) at 12MHz, and 50-60MIPS burst. Those are essentially Forth MIPS

too, since its machine language basically is Forth, meaning that a 6502 running ITC (indirect-threaded code) Forth would have to run

at over 1GHz to keep up. You can see in the performance comparison table that the 12MHz RTX2000 did a 64-point FFT 137 times as fast as

the 20MHz 80386 did, and Sieve benchmark 16 times as fast as the 80386.

From another ad, I see an interrupt took four clocks, while return-from-interrupt took zero.

...And that brings up another point: interrupt-service efficiency. As long as your main program and subroutines

don't eliminate data-stack bytes before the last access to them, you can interrupt a program at any point, and the ISR can use the same stacks

and the same X-register data-stack pointer, without having to save anything except A and Y. The only exception I can think of is that if

the ISR does use the data stack, you should disable interrupts the few times when X contains something other than the data-stack pointer, so you

don't overwrite something that another part of the program still needs. This should require little additional explanation for assembly

language; but related, for Forth, I have an article on

zero-overhead interrupt service in high-level Forth on

65C02.

The newest interest of Chuck Moore (the inventor of Forth who turns 86 this year, 2024) seems to be

GreenArrays where he has 144 stack processors on a single IC, for massively

parallel embedded applications. Each of the processors on the IC runs Forth instructions in as little as 1.4ns, putting equivalent peak

performance at 100GIPS, or 100,000,000,000 Forth instructions per second.

8. RPN operations <--Previous |

Next--> 10. 65c02 added instructions

last updated Apr 29, 2024

Decades ago, there were calculator wars between the AOS crowd and the RPN crowd, especially TI versus HP. The TI fans could really

only argue that AOS was supposedly more intuitive (as mentioned near the beginning of the last section), while the HP fans could tout RPN's

reduced number of keystrokes, program steps, and variables needed for any given job. In contests, the RPN boys routinely got the job

done faster. TI supplied more memory for the money (which is why I started with a TI-58c), but HP gave more quality and every other

desirable trait, including, in the HP-41, potentially a lot more total memory than the TI-59 offered—just at a higher price. When

I went from the TI-59 to the HP-41cx (both shown at right), I definitely felt all of these effects. I never read what took place in

board meetings after that, but TI must have seen that there was no hope of winning the favor of the professionals, and the result was that

TI went after the school market while HP kept the professional market.

Decades ago, there were calculator wars between the AOS crowd and the RPN crowd, especially TI versus HP. The TI fans could really

only argue that AOS was supposedly more intuitive (as mentioned near the beginning of the last section), while the HP fans could tout RPN's

reduced number of keystrokes, program steps, and variables needed for any given job. In contests, the RPN boys routinely got the job

done faster. TI supplied more memory for the money (which is why I started with a TI-58c), but HP gave more quality and every other

desirable trait, including, in the HP-41, potentially a lot more total memory than the TI-59 offered—just at a higher price. When

I went from the TI-59 to the HP-41cx (both shown at right), I definitely felt all of these effects. I never read what took place in

board meetings after that, but TI must have seen that there was no hope of winning the favor of the professionals, and the result was that

TI went after the school market while HP kept the professional market.